Reversing Windows ALPC Internals with Kernel Debugging

TL;DR: I wrote a custom ALPC server and client, attached a kernel debugger to a VM, and traced every syscall from user-mode through the kernel, proving how ports are created, how the three-port triangle forms during connection, and how the double-buffer copy works at the memcpy level, and stumbling into undocumented behavior like direct delivery optimization and orphaned message blobs. No documentation was trusted; everything was verified live in WinDbg.

Why ALPC?

ALPC (Advanced Local Procedure Call) is the backbone of Windows IPC. Most local RPC calls use the ncalrpc transport, which internally uses ALPC. COM activations and UAC prompts also flow through it. Microsoft does not officially document the NtAlpc* API surface, and the internal kernel implementation (the Alpcp* routines, message blobs, queue internals) is even less visible. There are a few excellent blog posts out there (shoutout to csandker.io), but I wanted to see the internals with my own eyes.

So I built a simple async ALPC server and client, attached WinDbg to a VM, and started stepping through the kernel.

The Setup

- ALPC Server: creates a named port `\RPC Control\ALPC_Reversing_Port`, spawns a `ReceiveThread` that loops on `NtAlpcSendWaitReceivePort` (blocking receive), accepts connections, and prints received messages.

- ALPC Client: connects via `NtAlpcConnectPort`, spawns a `SendThread` that fires 5 async messages (“Hello from client! message #1…” through #5), then exits.

- WinDbg: kernel debugger attached to the VM. All breakpoints, disassembly, and structure dumps happen at ring 0.

- IDA Pro: static analysis of the ntdll stubs to find syscall numbers.

All the ALPC functions are undocumented — resolved at runtime via GetProcAddress on ntdll.dll.

Part 1: NtAlpcCreatePort — Building the Lobby

The server’s first move is creating a Connection Port — a named kernel object that clients will find and connect to.

// server.c line 209

status = NtAlpcCreatePort(&serverPort, &objAttr, &portAttr);

The User-Mode Stub Is Nothing

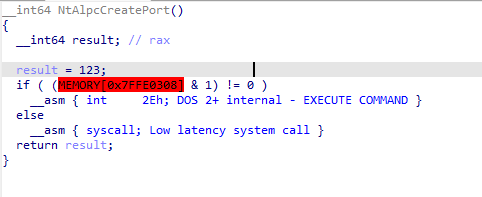

I opened ntdll.dll in IDA and decompiled NtAlpcCreatePort. It’s a 6-instruction trampoline:

- On this Windows build, loads `0x7B` (123 decimal) into EAX, the System Service Number (this value changes per Windows version, build, and architecture)

- Checks `KUSER_SHARED_DATA.SystemCall` for fast syscall vs legacy `int 2Eh`

- Fires `syscall`

No logic. No validation. Just “load the SSN and switch to ring 0.”

Proving the SSDT Dispatch

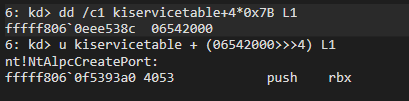

In WinDbg, I decoded the SSDT entry for syscall 0x7B:

dd /c1 kiservicetable+4*0x7B L1 → 06542000

u kiservicetable + (06542000>>>4) L1 → nt!NtAlpcCreatePort @ fffff806`0f5393a0

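Each SSDT entry is a packed 32-bit value: the routine’s offset from KiServiceTable shifted left by 4 bits, with the low 4 bits reserved for an argument count. Decode: kiservicetable + (value >>> 4), where >>> is WinDbg’s arithmetic right shift. Confirmed: syscall 0x7B → nt!NtAlpcCreatePort.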
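The decode step can be written out in C as a minimal sketch. The function and parameter names are mine, not kernel symbols, and the meaning of the low 4 bits is stated as an assumption (they are commonly described as a stack-argument count). With the value from the dump above, `0x06542000` decodes to an offset of `0x654200` from the table base.

```c
#include <assert.h>
#include <stdint.h>

/* Decode one packed service-table entry into an absolute routine address.
 * table_base stands in for the address of nt!KiServiceTable (hypothetical
 * parameter name); entry is the signed 32-bit value read from the table. */
static uint64_t ssdt_decode(uint64_t table_base, int32_t entry)
{
    /* Clear the low 4 bits, then divide by 16. This matches an arithmetic
     * right shift by 4 without relying on implementation-defined shifts
     * of negative integers. */
    int64_t offset = (int64_t)(entry & ~0xF) / 16;
    return table_base + (uint64_t)offset;
}

/* Low 4 bits of the entry (assumed here to be a stack-argument count). */
static unsigned ssdt_low_bits(int32_t entry)
{
    return (unsigned)entry & 0xFu;
}
```

Calling `ssdt_decode(base, 0x06542000)` returns `base + 0x654200`, which is the same arithmetic the `u kiservicetable + (06542000>>>4)` command performs.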

The Kernel Function Is Also a Wrapper

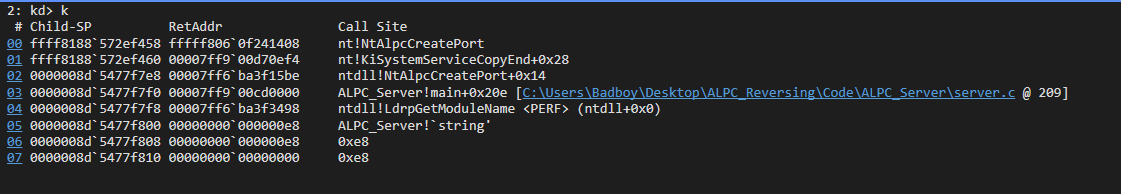

I set bp nt!NtAlpcCreatePort, ran the server, and hit the breakpoint. The call stack proves the full path:

ALPC_Server!main+0x20e [server.c @ 209]

→ ntdll!NtAlpcCreatePort+0x14

→ nt!KiSystemServiceCopyEnd+0x28

→ nt!NtAlpcCreatePort

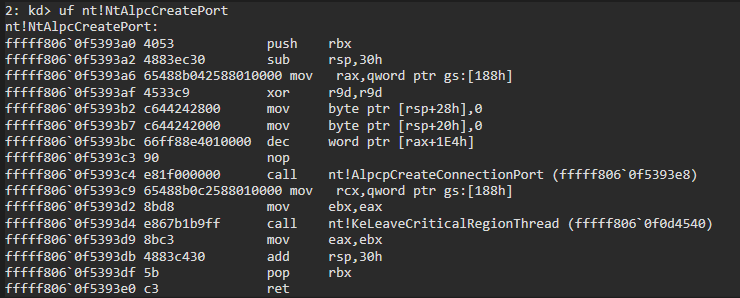

Disassembly of nt!NtAlpcCreatePort (uf):

Three things: enter critical region (disable APCs), call AlpcpCreateConnectionPort, leave critical region. That’s it. The real work is one layer deeper.

AlpcpCreateConnectionPort: The Recipe

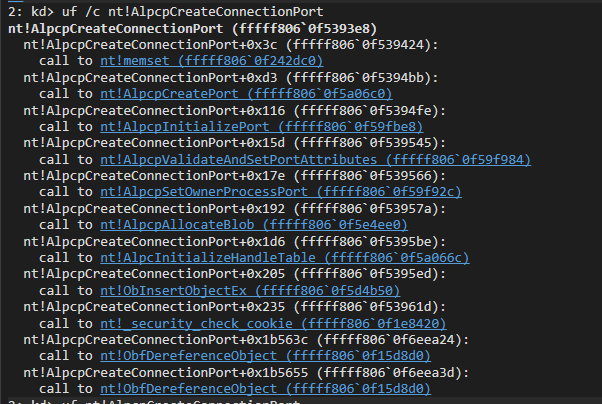

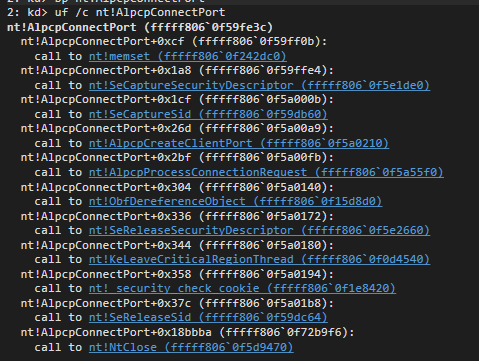

uf /c nt!AlpcpCreateConnectionPort reveals the complete port creation sequence:

| # | Function | What It Does |
|---|---|---|
| 1 | `memset` | Zero the stack buffer |
| 2 | `AlpcpCreatePort` | ObCreateObjectEx → allocates _ALPC_PORT in kernel pool |
| 3 | `AlpcpInitializePort` | Sets up 5 message queues, semaphore, locks, port type = Connection |
| 4 | `AlpcpValidateAndSetPortAttributes` | Copies user-mode ALPC_PORT_ATTRIBUTES into kernel object |
| 5 | `AlpcpSetOwnerProcessPort` | Stamps OwnerProcess = current EPROCESS |
| 6 | `AlpcpAllocateBlob` | Internal tracking structure |
| 7 | `AlpcInitializeHandleTable` | For passing handles through ALPC messages |
| 8 | `ObInsertObjectEx` | Registers the port in the Object Manager; now globally visible |
| 9 | `ObfDereferenceObject` (×2) | Balance references |

Step 8 is the key — after ObInsertObjectEx, the port is a first-class kernel object at \RPC Control\ALPC_Reversing_Port. Any process that knows the name can connect.

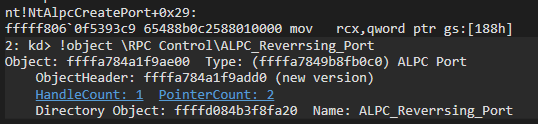

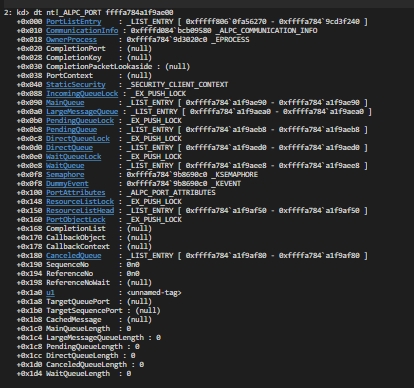

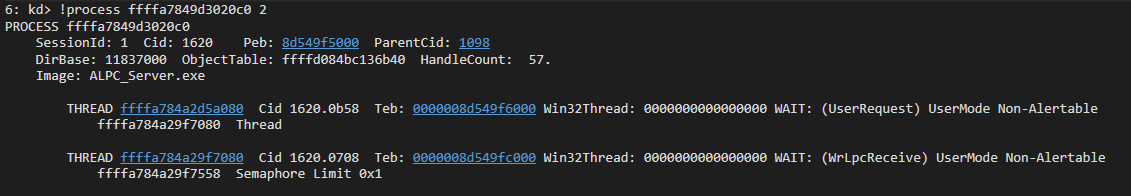

The Living Port Object

After creation, I looked up the port in the kernel namespace:

!object \RPC Control\ALPC_Reversing_Port

Object: ffffa784a1f9ae00 Type: ALPC Port

HandleCount: 1 PointerCount: 2

Name: ALPC_Reversing_Port

And dumped the full _ALPC_PORT structure (280+ bytes of internal state):

Key observations at this point:

- All 5 queues empty: MainQueue, PendingQueue, LargeMessageQueue, DirectQueue, and CancelQueue all point to themselves (circular list = empty)

- OwnerProcess → our ALPC_Server.exe (verified via `dt nt!_EPROCESS`)

- TargetQueuePort = null: no client connected yet

- Semaphore present: the `ReceiveThread` will block on this when waiting for messages

At this point the Connection Port is fully initialized and visible in the Object Manager namespace. A client can now discover and connect to it.
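The "pointing to themselves" test for an empty queue is the usual circular-list convention. Here is a small C sketch of it, assuming a list node shaped like the kernel's `LIST_ENTRY`; the lowercase type and helper names are my own and are not kernel routines.

```c
#include <assert.h>
#include <stdbool.h>

/* Same shape as the kernel's LIST_ENTRY: forward and backward links. */
typedef struct list_entry {
    struct list_entry *flink;
    struct list_entry *blink;
} list_entry;

/* An initialized head points at itself in both directions. */
static void list_init(list_entry *head)
{
    head->flink = head;
    head->blink = head;
}

/* Empty means the forward link leads straight back to the head. */
static bool list_is_empty(const list_entry *head)
{
    return head->flink == head;
}

/* Append a node just before the head, i.e. at the tail of the list. */
static void list_insert_tail(list_entry *head, list_entry *node)
{
    node->flink = head;
    node->blink = head->blink;
    head->blink->flink = node;
    head->blink = node;
}
```

In a debugger dump this shows up as a queue head whose Flink and Blink both equal the address of the head itself.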

Part 2: NtAlpcConnectPort — The Three-Port Handshake

When the client connects, the kernel creates two more ports and links all three into a triangle. This is the most complex part of ALPC initialization.

// client.c line 158

status = NtAlpcConnectPort(&commPort, &portName, NULL, &portAttr,

ALPC_MSGFLG_SYNC_REQUEST, NULL,

&connectMsg.Header, &connectMsgSz,

NULL, NULL, &timeout);

The Three Ports

| Port | Created When | Created By | Role |
|---|---|---|---|
| Connection Port | Server calls `NtAlpcCreatePort` | `AlpcpCreateConnectionPort` | Named lobby; all clients connect through this |
| Client Communication Port | Client calls `NtAlpcConnectPort` | `AlpcpCreateClientPort` | Client’s private endpoint |
| Server Communication Port | Server calls `NtAlpcAcceptConnectPort` | `AlpcpAcceptConnectPort` | Server’s private endpoint for THIS specific client |

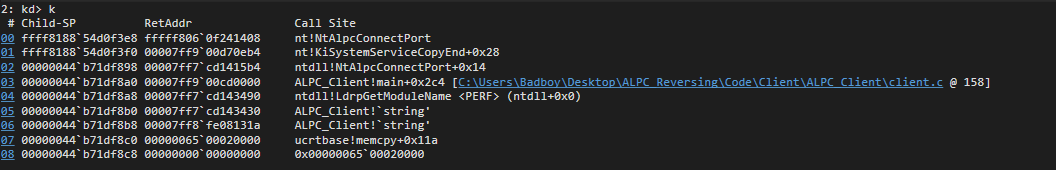

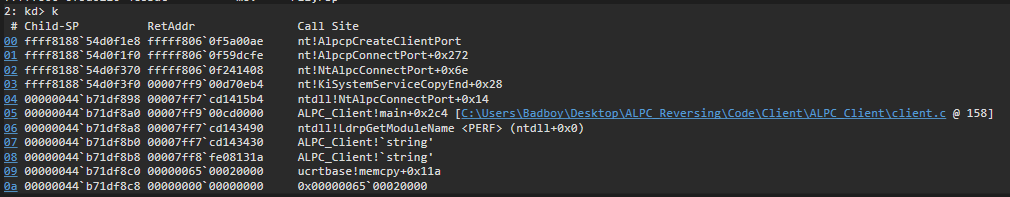

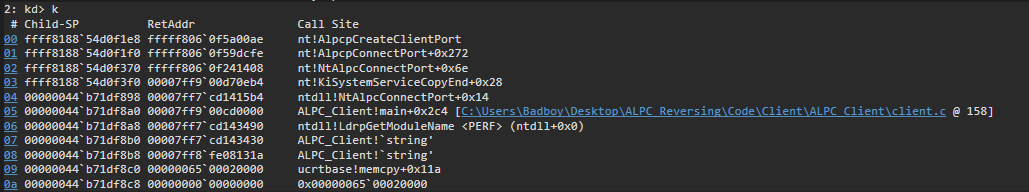

NtAlpcConnectPort → AlpcpConnectPort → Two Key Functions

The breakpoint fires from the client:

ALPC_Client!main+0x2c4 [client.c @ 158]

→ ntdll!NtAlpcConnectPort+0x14

→ nt!NtAlpcConnectPort

Just like the create path, NtAlpcConnectPort is a wrapper. AlpcpConnectPort does the work through two key calls:

AlpcpConnectPort

├─ AlpcpCreateClientPort ← builds the Client Communication Port

└─ AlpcpProcessConnectionRequest ← handshake with server

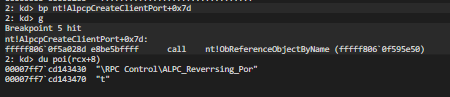

AlpcpCreateClientPort: Finding the Server

This function has 20+ internal calls. The critical ones:

- `ObReferenceObjectByName`: looks up `\RPC Control\ALPC_Reversing_Port` in the Object Manager namespace. This is how the client FINDS the server’s Connection Port. I set a breakpoint right at the call site and dumped the name string: `du poi(rcx+8)` → `\RPC Control\ALPC_Reversing_Port`

- `AlpcpCheckConnectionSecurity`: security gate. Checks the client’s token against the port’s security descriptor. If denied, connection fails with `STATUS_ACCESS_DENIED`.

- `AlpcpCreatePort` → `AlpcpInitializePort`: allocates and initializes a NEW `_ALPC_PORT`. This becomes the Client Communication Port.

- `ObInsertObjectEx`: registers it, returns a HANDLE to the client.

- `AlpcpSetOwnerProcessPort`: stamps `OwnerProcess = ALPC_Client.exe`.

At this point the Client Communication Port exists but isn’t linked to anything yet. The server doesn’t even know about the client.

AlpcpProcessConnectionRequest: The Handshake Dance

This is where it gets interesting. The function:

- Builds a CONNECTION_REQUEST message: wraps our connect payload in an `LPC_CONNECTION_REQUEST`

- Dispatches it to the Connection Port: `AlpcpDispatchConnectionRequest` enqueues on MainQueue, signals the Semaphore

- Blocks the client thread: `AlpcpReceiveSynchronousReply` puts the client to sleep

Now the server’s ReceiveThread wakes up and sees LPC_CONNECTION_REQUEST. Our server.c calls:

// server.c line 109

NtAlpcAcceptConnectPort(&commPort, serverPort, ALPC_MSGFLG_NONE,

NULL, NULL, NULL, &recvMsg.Header, NULL, TRUE);

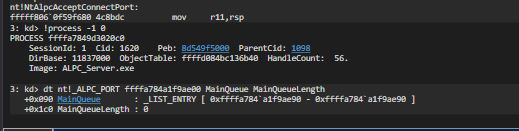

AlpcpAcceptConnectPort: Creating the Third Port

This hits NtAlpcAcceptConnectPort in the server’s context:

The internal function AlpcpAcceptConnectPort has ~40 calls organized in 5 phases:

Phase 1 — Validate: Find the original connection request in the queue, validate it’s legitimate.

Phase 2 — Create Server Communication Port: AlpcpCreatePort → AlpcpInitializePort → AlpcpSetOwnerProcessPort (owner = ALPC_Server.exe).

Phase 3 — Link Everything Together: This is where the triangle forms:

- Server Comm Port’s `TargetQueuePort` → Client Communication Port

- Client Comm Port’s `TargetQueuePort` → Connection Port

- A shared `_ALPC_COMMUNICATION_INFO` structure links both comm ports

- The pair is inserted into the Connection Port’s `CommunicationsList`

Phase 4 — Wake the Client: AlpcpDispatchMessage sends the accept reply. The client’s AlpcpReceiveSynchronousReply wakes up with STATUS_SUCCESS.

Phase 5 — Cleanup.

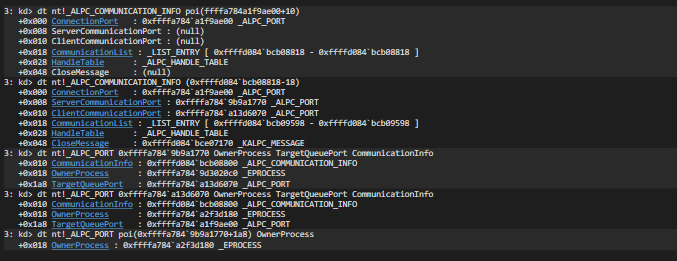

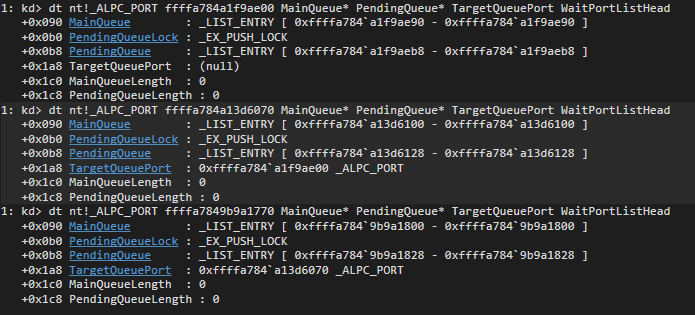

The Architecture After Connection

Dumping _ALPC_COMMUNICATION_INFO proves the full triangle:

Shared CommunicationInfo:

ConnectionPort = ffffa784a1f9ae00

ServerCommunicationPort = ffffa7849b9a1770 (Owner: ALPC_Server.exe)

ClientCommunicationPort = ffffa784a13d6070 (Owner: ALPC_Client.exe)

The TargetQueuePort Asymmetry — The Big Discovery

One interesting observation during tracing was the asymmetric use of TargetQueuePort. It is NOT symmetrical:

Client Comm Port ──TargetQueuePort──► Connection Port (MULTIPLEXED)

Server Comm Port ──TargetQueuePort──► Client Comm Port (DIRECT)

Why? The Connection Port has a single shared queue. All clients’ messages funnel into it. The server’s ReceiveThread pulls from this one queue and can serve hundreds of clients without dedicating a thread to each one.

But replies need to be targeted — when the server replies to client A, it shouldn’t go to client B. So the Server Communication Port points directly at the Client Communication Port. Direct delivery, no multiplexing.

Client A ──TargetQueuePort──► Connection Port MainQueue (shared)

Client B ──TargetQueuePort──► Connection Port MainQueue (shared)

Client C ──TargetQueuePort──► Connection Port MainQueue (shared)

(the server waits on the Connection Port)

Server reads message, determines sender via PORT_MESSAGE.ClientId

→ replies through Server Comm Port for THAT client

→ TargetQueuePort → goes directly to THAT Client Comm Port only

Note: This diagram is intentionally simplified. The actual message routing involves `ALPC_PORT.Queue` structures and additional internal logic, but `TargetQueuePort` captures the essential asymmetry.
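The asymmetry is easy to see in a toy model. The sketch below is not the kernel's `_ALPC_PORT`; it keeps only one pointer per port, and every name in it (`toy_port`, `link_pair`, `destination_of`) is hypothetical. It wires the pointers the way the trace showed them and then asks where a send through each port would land.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { PORT_CONNECTION, PORT_CLIENT_COMM, PORT_SERVER_COMM } port_kind;

/* Toy port: only the one link discussed above, nothing else from _ALPC_PORT. */
typedef struct toy_port {
    port_kind kind;
    struct toy_port *target_queue_port; /* where sends from this port land */
} toy_port;

/* Wire one client/server pair to a connection port as seen in the trace. */
static void link_pair(toy_port *conn, toy_port *client, toy_port *server)
{
    client->kind = PORT_CLIENT_COMM;
    client->target_queue_port = conn;    /* requests: multiplexed into the lobby */
    server->kind = PORT_SERVER_COMM;
    server->target_queue_port = client;  /* replies: straight to this client */
}

/* Port whose queue receives a message sent through `from`. */
static const toy_port *destination_of(const toy_port *from)
{
    return from->target_queue_port;
}
```

With two pairs linked to the same connection port, both client ports resolve to the shared lobby, while each server comm port resolves to exactly one client.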

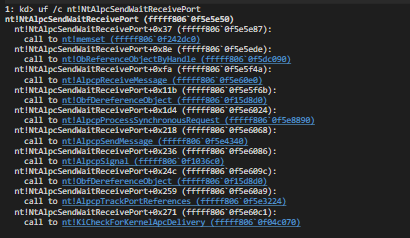

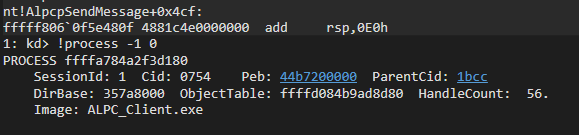

Part 3: NtAlpcSendWaitReceivePort — The Double Buffer

The connection is established. Three ports exist. Now the client sends messages and we trace every byte through the kernel.

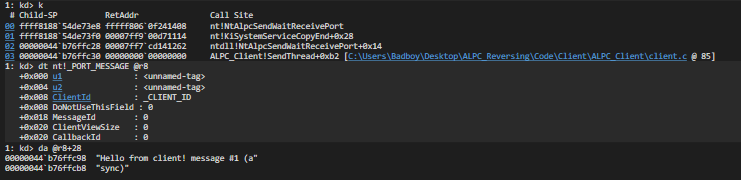

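// client.c, SendThread, line 85

NtAlpcSendWaitReceivePort(g_hPort, ALPC_MSGFLG_RELEASE_MESSAGE,

(PPORT_MESSAGE)&sendMsg, // SendMessage

NULL, // SendMessageAttributes

NULL, NULL, // ReceiveMessage, BufferLength

NULL, NULL); // ReceiveMessageAttributes, Timeout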

ALPC_MSGFLG_RELEASE_MESSAGE tells the kernel that the sender’s message buffer can be released after the send completes. Combined with RecvMsg = NULL (no receive buffer provided), this effectively makes it a fire-and-forget pattern.

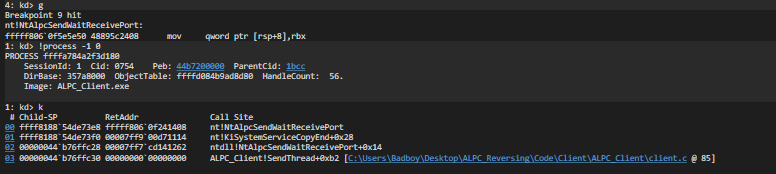

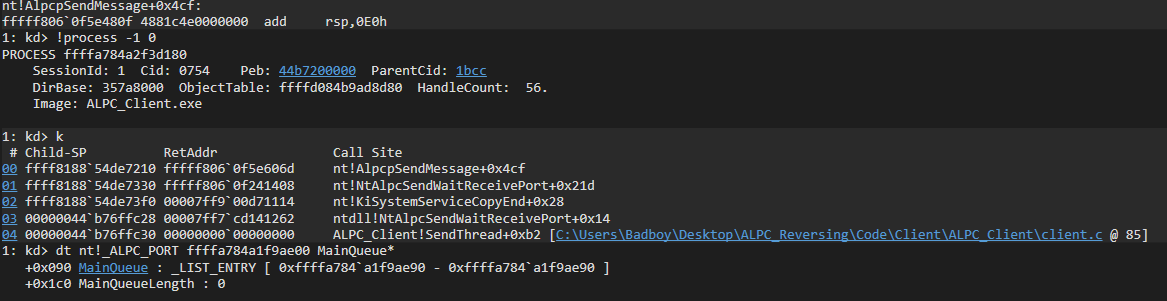

One Syscall, Two Paths

NtAlpcSendWaitReceivePort handles both sending AND receiving. The first hit is the server entering its blocking wait:

ALPC_Server!ReceiveThread+0xb1 [server.c @ 68]

→ nt!NtAlpcSendWaitReceivePort

Server passes SendMsg = NULL, RecvMsg = &buffer — pure receive. It blocks on the Connection Port’s Semaphore.

The second hit is the client sending its first message:

ALPC_Client!SendThread+0xd6 [client.c @ 85]

→ nt!NtAlpcSendWaitReceivePort

Proving What’s in the Client’s Buffer

Before the kernel touches anything, we dump the client’s send buffer:

da @r8+28

"Hello from client! message #1 (async)"

This string lives in client user-space memory. It’s about to be copied into the kernel.
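The `+28` in that command skips the message header. As a rough illustration, here is a portable mirror of the 64-bit `PORT_MESSAGE` header. The field names follow community headers rather than anything shown in this post, fixed-width integers replace `HANDLE` and `SIZE_T`, and `send_buffer` is a hypothetical stand-in for the client's message struct.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Portable mirror of the 64-bit PORT_MESSAGE header (assumed layout). */
typedef struct port_message_hdr {
    uint16_t data_length;       /* payload bytes */
    uint16_t total_length;      /* header + payload bytes */
    uint16_t type;
    uint16_t data_info_offset;
    uint64_t client_process;    /* CLIENT_ID.UniqueProcess */
    uint64_t client_thread;     /* CLIENT_ID.UniqueThread */
    uint32_t message_id;
    uint32_t pad;               /* alignment padding made explicit */
    uint64_t client_view_size;
} port_message_hdr;

static_assert(sizeof(port_message_hdr) == 0x28, "header must be 0x28 bytes");

/* Hypothetical send buffer: header immediately followed by the text. */
typedef struct send_buffer {
    port_message_hdr header;
    char text[64];
} send_buffer;

/* Byte offset at which the payload starts inside the buffer. */
static size_t payload_offset(void)
{
    return offsetof(send_buffer, text);
}
```

`payload_offset()` evaluates to `0x28`, which is why the string shows up at `@r8+28` when `r8` holds the send-message pointer.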

All Queues Empty Before Send

We snapshot all three ports’ queues:

Connection Port: MainQueue = 0, PendingQueue = 0

Client Comm Port: MainQueue = 0, PendingQueue = 0

Server Comm Port: MainQueue = 0, PendingQueue = 0

Clean slate. Now we send.

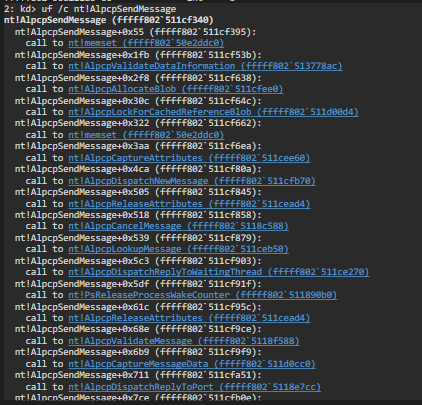

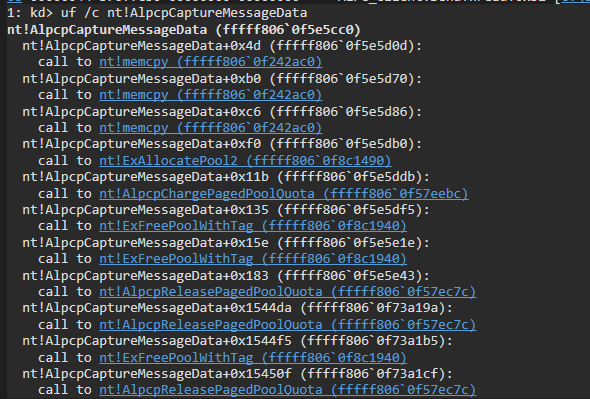

The Send Path: AlpcpSendMessage → AlpcpCaptureMessageData

uf /c nt!AlpcpSendMessage reveals the full send chain:

AlpcpSendMessage

├─ AlpcpAllocateBlob ← allocate kernel buffer

├─ AlpcpValidateMessage ← validate PORT_MESSAGE fields

├─ AlpcpCaptureMessageData ← FIRST COPY (client → kernel)

├─ AlpcpDispatchNewMessage ← route to target port

└─ AlpcpCompleteDispatchMessage

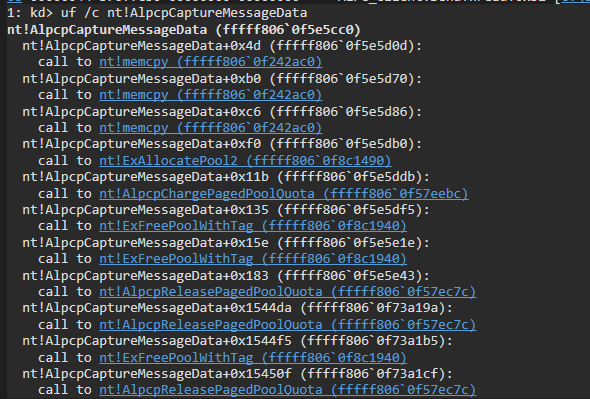

AlpcpCaptureMessageData: Three memcpy Calls

This is where the first copy happens. uf /c:

AlpcpCaptureMessageData:

call nt!memcpy ← PORT_MESSAGE header (0x28 bytes)

call nt!memcpy ← payload data

call nt!memcpy ← extra data (attributes)

call nt!ExAllocatePool2 ← allocate kernel pool

The full call stack when this fires:

SendThread → NtAlpcSendWaitReceivePort → AlpcpSendMessage

→ AlpcpDispatchNewMessage → AlpcpCompleteDispatchMessage

→ AlpcpCaptureMessageDataSafe → AlpcpCaptureMessageData (3× memcpy)

Direct Delivery: MainQueue Stays at Zero

After the send completes:

MainQueueLength : 0 ← STILL ZERO

PendingQueueLength : 1 ← message tracked here

The message never touched MainQueue. If the server thread is already blocked in NtAlpcSendWaitReceivePort, the kernel may bypass queue insertion and deliver the message directly to the waiting thread. This is an undocumented fast path:

SLOW PATH (no thread waiting):

message → MainQueue → signal Semaphore → thread wakes → dequeues

FAST PATH (thread already waiting):

message → deliver directly to waiting thread → thread wakes immediately
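The two paths can be modeled in a few lines of C. This is a toy model of the behavior I observed, not a reconstruction of `AlpcpDispatchNewMessage`; the struct, the enum, and the `dispatch` function are all invented for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Toy port state: a queue length counter and at most one blocked receiver. */
typedef struct toy_port {
    size_t main_queue_len;     /* messages parked for a later receive */
    bool receiver_waiting;     /* a thread is already blocked in receive */
    const char *delivered;     /* message handed straight to that thread */
} toy_port;

typedef enum { PATH_QUEUED, PATH_DIRECT } dispatch_path;

/* Pick a path the way the two diagrams above describe it. */
static dispatch_path dispatch(toy_port *port, const char *msg)
{
    if (port->receiver_waiting) {
        port->delivered = msg;          /* fast path: no queue insertion */
        port->receiver_waiting = false; /* the receiver wakes up */
        return PATH_DIRECT;
    }
    port->main_queue_len++;             /* slow path: park it in the queue */
    return PATH_QUEUED;
}
```

In this model a send that finds a waiting receiver leaves `main_queue_len` at zero, matching the `MainQueueLength : 0` reading above.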

_KALPC_MESSAGE: The Kernel Blob

The _KALPC_MESSAGE structure in kernel pool:

dt nt!_KALPC_MESSAGE ffffd084b601cca0

OwnerPort : Client Comm Port (who sent it)

PortQueue : Connection Port (which queue it's in)

CommunicationInfo : shared pair structure

ExtensionBuffer : PORT_MESSAGE + payload at offset +0f0

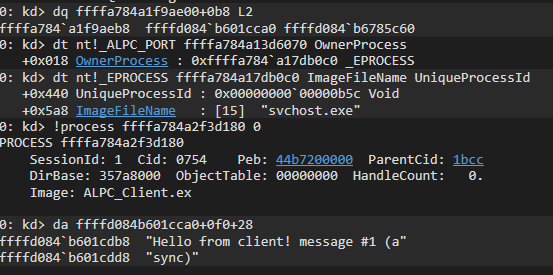

First Copy

da ffffd084b601cca0+0f0+28

"Hello from client! message #1 (async)"

The client’s message string is sitting in kernel pool memory. Address ffffd084b601cdb8 — this is nonpaged pool allocated by ExAllocatePool2 during AlpcpCaptureMessageData. First copy: proven.
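The payload address follows directly from the two offsets used in the `da` command. A tiny C sketch of that arithmetic, with constant names of my own choosing and offsets that are only known to hold for this build:

```c
#include <assert.h>
#include <stdint.h>

enum {
    BLOB_MESSAGE_OFFSET = 0xF0, /* PORT_MESSAGE inside the blob, per the dt output */
    PORT_MESSAGE_SIZE   = 0x28  /* header size seen in AlpcpCaptureMessageData */
};

/* Address of the payload text for a blob at the given kernel address. */
static uint64_t payload_address(uint64_t blob_base)
{
    return blob_base + BLOB_MESSAGE_OFFSET + PORT_MESSAGE_SIZE;
}
```

For the blob at `ffffd084b601cca0` this gives `ffffd084b601cdb8`, the address quoted above.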

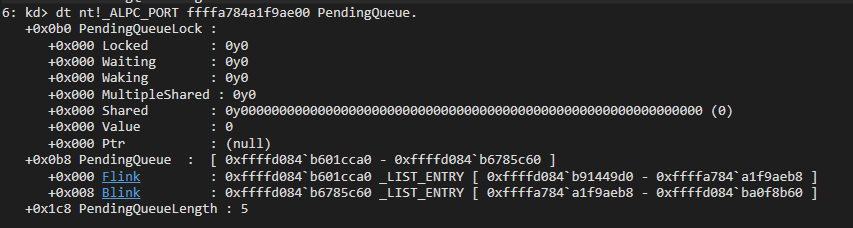

Walking All 5 Messages in PendingQueue

The client fires 5 messages. After all sends, PendingQueueLength = 5. The PendingQueue is a circular doubly-linked list, so we walk it by following Flink pointers:

| # | Kernel Address | Payload |
|---|---|---|
| 1 | ffffd084b601cca0 | "Hello from client! message #1 (async)" |
| 2 | ffffd084b91449d0 | "Hello from client! message #2 (async)" |
| 3 | ffffd084bca5eca0 | "Hello from client! message #3 (async)" |
| 4 | ffffd084ba0f8b60 | "Hello from client! message #4 (async)" |
| 5 | ffffd084b6785c60 | "Hello from client! message #5 (async)" |

Five separate _KALPC_MESSAGE blobs in kernel pool. Each with a full copy of the client’s message. Each linked into a circular list hanging off the Connection Port’s PendingQueue.
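Walking such a list by hand is the same loop a debugger script would run: start at the head's Flink, stop when you are back at the head, and convert each link pointer into its enclosing structure. The sketch below uses a toy message type (not `_KALPC_MESSAGE`) and a `CONTAINER_OF` macro that plays the role of `CONTAINING_RECORD`; all names are illustrative.

```c
#include <assert.h>
#include <stddef.h>

typedef struct list_entry {
    struct list_entry *flink, *blink;
} list_entry;

/* Toy stand-in for a queued message: an embedded link plus a payload. */
typedef struct toy_message {
    list_entry entry;
    const char *payload;
} toy_message;

/* Recover the enclosing structure from a pointer to its embedded link. */
#define CONTAINER_OF(ptr, type, member) \
    ((const type *)((const char *)(ptr) - offsetof(type, member)))

/* Walk from head->flink until we arrive back at the head, storing up to
 * max payload pointers in out. Returns the number of entries visited. */
static size_t walk_pending(const list_entry *head, const char **out, size_t max)
{
    size_t n = 0;
    for (const list_entry *e = head->flink; e != head; e = e->flink) {
        const toy_message *m = CONTAINER_OF(e, toy_message, entry);
        if (n < max)
            out[n] = m->payload;
        n++;
    }
    return n;
}
```

The return value plays the role of `PendingQueueLength`: the walk ends exactly when the Flink chain returns to the queue head.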

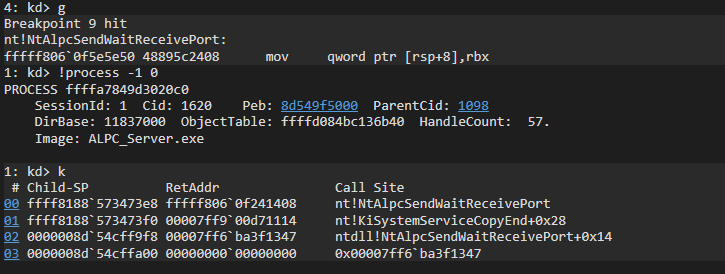

The Receive Path: AlpcpReadMessageData — The Second Copy

When the server calls NtAlpcSendWaitReceivePort to receive, the kernel calls AlpcpReceiveMessage:

AlpcpReceiveMessage

├─ ProbeForWrite ← validate server buffer is writable

├─ AlpcpReceiveMessagePort ← dequeue message

├─ AlpcpReadMessageData ← SECOND COPY (kernel → server)

├─ AlpcpExposeAttributes ← expose message attributes

└─ AlpcpUnlockBlob ← release reference

uf /c nt!AlpcpReadMessageData:

AlpcpReadMessageData:

call nt!memcpy ← copy PORT_MESSAGE header (kernel → server buffer)

call nt!memcpy ← copy payload data (kernel → server buffer)

Two memcpy calls. The mirror of AlpcpCaptureMessageData. The kernel copies from the _KALPC_MESSAGE blob into the server’s user-space receive buffer.

The server printed all 5 messages in its console. Second copy: proven.

The Complete Double-Buffer Proof

Client buffer (user-space): "Hello from client!..."

↓ AlpcpCaptureMessageData, 3× memcpy

_KALPC_MESSAGE blob (kernel pool)

↓ AlpcpReadMessageData, 2× memcpy

Server buffer (user-space): "Hello from client!..."

Both copies proven at the memcpy level with live kernel debugging. The sender and receiver never share a buffer. The kernel is always the intermediary.
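The pattern itself is simple enough to mimic in user-mode C. This sketch uses an ordinary struct as the "kernel" intermediary and plain `memcpy` for both hops; `toy_channel`, `toy_send`, and `toy_receive` are hypothetical names, and nothing here touches real ALPC.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define MSG_MAX 64

/* The intermediary buffer stands in for the kernel-pool message blob. */
typedef struct toy_channel {
    char kernel_blob[MSG_MAX];
    size_t blob_len;
} toy_channel;

/* First copy: sender's buffer into the intermediary. Returns 0 on success. */
static int toy_send(toy_channel *ch, const char *client_buf, size_t len)
{
    if (len > MSG_MAX)
        return -1;
    memcpy(ch->kernel_blob, client_buf, len);
    ch->blob_len = len;
    return 0;
}

/* Second copy: intermediary into the receiver's buffer. Returns bytes copied. */
static size_t toy_receive(const toy_channel *ch, char *server_buf, size_t cap)
{
    size_t n = ch->blob_len < cap ? ch->blob_len : cap;
    memcpy(server_buf, ch->kernel_blob, n);
    return n;
}
```

Because the receiver only ever reads from the intermediary, the sender can overwrite its own buffer right after `toy_send` returns without changing what the receiver sees.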

Part 4: The Orphaned Message Discovery

This was completely unexpected. After the server printed all 5 messages and the client exited, I checked PendingQueue:

dt nt!_ALPC_PORT ffffa784a1f9ae00 PendingQueueLength

+0x1c8 PendingQueueLength : 5

Still 5. The server already read them. The client is dead. But 5 message blobs are sitting in kernel pool.

Copy ≠ Free

AlpcpReadMessageData copies data into the server’s buffer but does NOT free the _KALPC_MESSAGE blob. The kernel keeps it as a “receipt” — in a normal request-reply pattern, the server would reply and the kernel would match the reply to the original message, THEN free the blob:

NORMAL (request-reply):

Client sends → PendingQueue = 1

Server replies → kernel matches reply to blob → PendingQueue = 0

FIRE-AND-FORGET (our code):

Client sends → PendingQueue = 1

Server reads (no reply) → PendingQueue = STILL 1

Blob stays until port destruction
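The accounting behind those two scenarios fits in a few counters. This is a toy model of what I observed, with invented names, and it says nothing about how the kernel actually stores the count.

```c
#include <assert.h>
#include <stddef.h>

/* Toy counters for the behavior traced above; not the kernel's bookkeeping. */
typedef struct toy_port {
    size_t pending;    /* sent but not yet completed by a reply */
    size_t delivered;  /* copied out to the receiver at least once */
} toy_port;

/* A send creates a blob that is tracked as pending. */
static void on_send(toy_port *p)    { p->pending++; }

/* A receive copies the data out but completes nothing. */
static void on_receive(toy_port *p) { p->delivered++; }

/* A reply completes one pending message. */
static void on_reply(toy_port *p)   { if (p->pending) p->pending--; }

/* Destroying the port releases whatever is still pending. */
static void on_port_destroy(toy_port *p)
{
    p->pending = 0;
    p->delivered = 0;
}
```

Five sends followed by five receives leave `pending` at 5 in this model; only a reply or port destruction brings it down.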

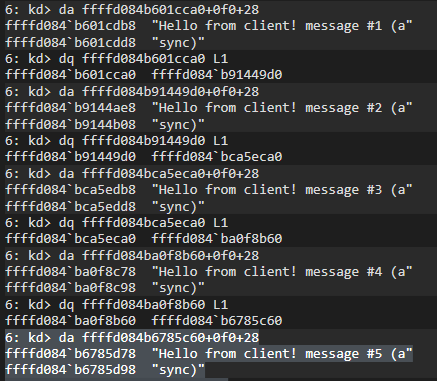

OwnerPort Goes NULL

After the client process exits:

dt nt!_KALPC_MESSAGE ffffd084b601cca0 OwnerPort

+0x018 OwnerPort : (null)

The kernel sets OwnerPort to NULL during client port teardown. Why? Because the Client Communication Port’s memory gets recycled:

dt nt!_ALPC_PORT ffffa784a13d6070 OwnerProcess

+0x018 OwnerProcess : 0xffffa784`a17db0c0 → "svchost.exe"

Our old Client Communication Port address now belongs to a completely different ALPC port owned by svchost.exe. The pool allocator freed the memory when the client exited and handed the same address to a new allocation. Had the kernel left OwnerPort untouched, each orphaned blob would hold a dangling pointer into that unrelated port, which is exactly the setup for a use-after-free.

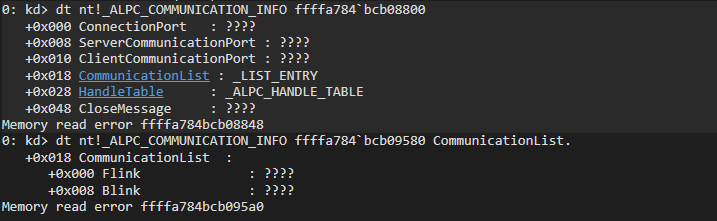

CommunicationInfo — Completely Destroyed

dt nt!_ALPC_COMMUNICATION_INFO ffffa784`bcb08800

ConnectionPort : ????

ServerCommunicationPort : ????

ClientCommunicationPort : ????

→ Memory read error — FREED

The shared _ALPC_COMMUNICATION_INFO that bound the comm port pair — gone. Server Communication Port — destroyed. Client Communication Port — recycled to svchost. The entire communication infrastructure was torn down.

Only two things survive: the Connection Port (server is still running) and the 5 orphaned message blobs parked in PendingQueue with OwnerPort = NULL.

The Full Message Lifecycle

┌─ ALIVE ──────────────────────────────────────────────────────┐

│ AlpcpCaptureMessageData → _KALPC_MESSAGE blob created │

│ OwnerPort = Client Comm Port, PortQueue = Connection Port │

│ PendingQueue: 0 → 1 │

└──────────────────────────────────────────────────────────────┘

│

┌─ DELIVERED ──────────────────────────────────────────────────┐

│ AlpcpReadMessageData → data copied to server buffer │

│ Server prints message ✓ │

│ BUT blob stays in PendingQueue (waiting for reply) │

└──────────────────────────────────────────────────────────────┘

│

┌─ ORPHANED ───────────────────────────────────────────────────┐

│ Client process terminates │

│ Client Comm Port destroyed, OwnerPort NULLed │

│ CommunicationInfo freed, Server Comm Port destroyed │

│ Port memory recycled by pool allocator │

│ Blobs remain in Connection Port's PendingQueue │

└──────────────────────────────────────────────────────────────┘

│

┌─ FREED ──────────────────────────────────────────────────────┐

│ Server process terminates │

│ Connection Port destroyed by AlpcpDeletePort │

│ Walks PendingQueue → frees all remaining blobs │

│ PendingQueue: 5 → 0. Everything cleaned up. │

└──────────────────────────────────────────────────────────────┘

Summary of Discoveries

What We Proved

- ntdll stubs are trivial: 6 instructions, load SSN, fire syscall. Zero logic in user-mode.

- Kernel Nt* functions are wrappers: `NtAlpcCreatePort` enters a critical region and delegates to `AlpcpCreateConnectionPort`. One call.

- Port creation is a 10-call recipe: `memset` → `AlpcpCreatePort` → `AlpcpInitializePort` → … → `ObInsertObjectEx` (visible to the system), followed by two `ObfDereferenceObject` calls.

- Connection is a three-way handshake: client creates Client Comm Port, dispatches connection request, sleeps. Server wakes, creates Server Comm Port, links both, wakes client.

- TargetQueuePort asymmetry: client → Connection Port (multiplexed, all clients into one queue), server → Client Comm Port (targeted reply). This is how one `ReceiveThread` serves many clients.

- Double-buffer is two explicit memcpy stages: `AlpcpCaptureMessageData` (3× memcpy, client → kernel), `AlpcpReadMessageData` (2× memcpy, kernel → server). Sender and receiver never share memory.

- Direct delivery optimization: when a thread is already waiting in WaitQueue, the kernel bypasses MainQueue entirely and delivers directly. The message never touches the queue.

- PendingQueue tracks messages that are still part of an active communication context: messages stay even after the receiver reads them. Only removed on reply or port destruction.

- Fire-and-forget leaks PendingQueue: unreplied messages become orphaned blobs with `OwnerPort = NULL`. They persist until the Connection Port is destroyed. Production servers should reply to drain the queue.

- Pool memory recycling can be fast: in this trace the freed port address was reused by an unrelated ALPC port within seconds. The kernel sets dangling pointers to `NULL` to prevent use-after-free.

Interesting Observations

During tracing, several interesting behaviors appeared that are not publicly documented:

- Direct delivery optimization: when a server thread is already waiting, the kernel skips queue insertion entirely and delivers the message directly to the blocked thread

- Message blobs surviving after delivery: `AlpcpReadMessageData` copies the data but does not free the `_KALPC_MESSAGE` blob; it persists until message completion (reply) or port destruction

- OwnerPort set to NULL to prevent use-after-free: when a client port is destroyed, the kernel proactively clears references in orphaned message blobs

- Pool address reuse by unrelated ALPC ports: freed port memory is immediately recycled by the pool allocator, and the same virtual address may be assigned to a completely different ALPC port within seconds

These behaviors reveal important implementation details about how the Windows kernel manages ALPC message lifecycles and port memory safety.